In a vSphere environment I am working on, we use VMware vShield Edge to provide firewalling and NAT, and to terminate VPNs, for customers.

On several occasions we were unable to publish config changes to some of our VSE devices from within vShield Manager. Whenever we tried, vShield Manager returned an error stating that it could not reach the vShield Edge device we were trying to configure.

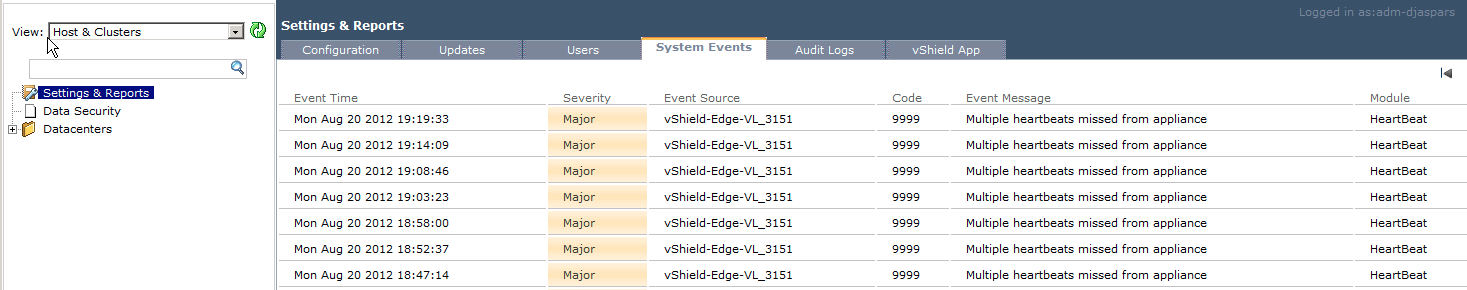

In addition, we noticed a lot of “Multiple heartbeats missed from appliance” errors for this specific Edge device in the vShield Manager System Events tab.

Another thing we noticed was that VMware Tools did not seem to be running on this specific VSE device.

We decided to open a case with VMware and were told this is a known issue in the version of vShield we are running (5.0.1) and that it will be fixed in a future version. (It is not fixed in version 5.0.2, which was released recently.)

These problems occur because the VSE device uses only a single filesystem, so when /var/log fills up, the root filesystem fills up with it. When that happens, you will see the issues described above.

In the next version of vShield this will be addressed by using a separate filesystem for /var (or /var/log), so when your log directory fills up, your root filesystem will not.
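To illustrate the difference on an ordinary Linux machine (this is not something to run on the VSE appliance itself), here is a minimal Python sketch that checks whether a log directory sits on the same filesystem as `/` by comparing the device IDs that `os.stat()` reports, and shows how full the root filesystem is via `shutil.disk_usage()`. The `/var/log` path is an assumption about a typical Linux layout, not something read from the VSE device.

```python
import os
import shutil


def same_filesystem(path_a: str, path_b: str) -> bool:
    """True if both paths live on the same mounted filesystem.

    Paths on the same filesystem report the same device ID (st_dev).
    """
    return os.stat(path_a).st_dev == os.stat(path_b).st_dev


def percent_used(path: str) -> float:
    """Percentage of the filesystem holding `path` that is currently in use."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total


if __name__ == "__main__":
    log_dir = "/var/log"  # assumption: typical Linux log location
    if not os.path.isdir(log_dir):
        print(f"{log_dir} does not exist on this machine")
    elif same_filesystem("/", log_dir):
        # Single-filesystem layout: log growth consumes root space directly.
        print(f"{log_dir} is on the root filesystem "
              f"({percent_used('/'):.1f}% of / used)")
    else:
        # Separate filesystem: a full log dir leaves / untouched.
        print(f"{log_dir} has its own filesystem")
```

On a single-filesystem layout like the one described above, the first branch applies, which is why a runaway log directory takes the root filesystem down with it.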

To confirm this is causing your issues, log in to the console of the faulty VSE device as the admin user and run the command `show system storage`.

As you can see in the example above, the root filesystem is full, which is what causes the issues described earlier.

There are a couple of ways to fix this.

The official way is to open a case with VMware and refer them to their internal KB article 2032017, which describes this issue and what VMware needs to do to resolve it. They can log in to the affected VSE device and truncate the messages files in /var/log.

This solution requires no downtime.

If you need or want to fix this yourself and can afford downtime on the VSE device, you can power it off, mount its disk in a Linux VM, truncate the log files on the mounted disk, remove the disk from the Linux VM, and power the VSE device on again.
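The truncation step could look something like the Python sketch below, run inside the helper Linux VM once the VSE disk is mounted. The mount point is whatever you pass on the command line (for example a hypothetical `/mnt/vse`), the assumption that logs live under `var/log` below that mount point follows from the single-filesystem layout described above, and the default `messages*` name pattern mirrors the messages files mentioned in the official fix. All of these are assumptions, so check what is actually filling the disk before running it.

```python
import fnmatch
import os
import sys


def truncate_logs(log_dir: str, pattern: str = "messages*") -> list[str]:
    """Truncate files under log_dir whose names match pattern to zero bytes.

    Files are emptied in place rather than deleted, so ownership,
    permissions and the file names the appliance expects are preserved.
    Returns the list of paths that were truncated.
    """
    truncated = []
    # os.walk does not follow symlinked directories by default.
    for root, _dirs, files in os.walk(log_dir):
        for name in files:
            if not fnmatch.fnmatch(name, pattern):
                continue
            path = os.path.join(root, name)
            # Skip symlinks so nothing outside the mounted disk is touched.
            if os.path.islink(path) or not os.path.isfile(path):
                continue
            os.truncate(path, 0)
            truncated.append(path)
    return truncated


if __name__ == "__main__" and len(sys.argv) > 1:
    # argv[1]: where the VSE disk is mounted in the helper VM (hypothetical, e.g. /mnt/vse)
    mount_point = sys.argv[1]
    for p in truncate_logs(os.path.join(mount_point, "var", "log")):
        print("truncated", p)
```

Saved as, say, `truncate_logs.py` (a hypothetical name), it would be run as `python3 truncate_logs.py /mnt/vse` before unmounting the disk. Truncating rather than deleting avoids leaving the appliance with missing log files it might expect to exist.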

Another option that could work is to simply remove the faulty VSE device with the vSphere client. The next time vShield Manager syncs with vCenter, it will notice the missing VSE device and recreate it. This will also cause downtime. VMware told me this should work without involving them, but I have not confirmed this myself.

Excellent post, Duco!

I had exactly the same issue with vShield Manager and got it resolved with help from VMware.

They deleted some log files in:

- `/home/secureall/secureall/logs`
- `/usr/tomcat/logs`
- `/usr/tomcat/temp`
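Before deleting anything, it can help to see which of these directories is actually consuming the space. A minimal Python sketch that totals the file sizes under each one (similar in spirit to `du -s`) might look like this; it assumes a root shell on the vShield Manager, which, as these comments suggest, normally only VMware support has.

```python
import os


def dir_size_bytes(path: str) -> int:
    """Sum the sizes of all regular files below path (symlinks are skipped)."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            full = os.path.join(root, name)
            if not os.path.islink(full) and os.path.isfile(full):
                total += os.path.getsize(full)
    return total


# The three directories VMware cleaned up in the comment above.
LOG_DIRS = [
    "/home/secureall/secureall/logs",
    "/usr/tomcat/logs",
    "/usr/tomcat/temp",
]

if __name__ == "__main__":
    for d in LOG_DIRS:
        if os.path.isdir(d):
            print(f"{d}: {dir_size_bytes(d) / 1024 / 1024:.1f} MB")
        else:
            print(f"{d}: not present on this machine")
```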

I have been playing with the VSE console and discovered this command: `purge log (manager|system)`. At the `manager#` prompt, `purge log` takes one of two options:

- `manager`: Delete manager logs
- `system`: Delete system logs

It would be interesting to see whether this command can truncate the logs and get vShield Manager back online.

Hi Duco, I know it’s 2 years on (and it’s nearly 2am here), but in my experience `purge log` will only free up around 7 or 8 MB.

I’m on the phone to VMware now, waiting for them to go in as root and clear the logs so we can upgrade our VSM to 5.1.4.

Listening to Chopin at the moment while waiting for the next call center to come online with an EE who knows the root password :’-(

Thanks Duco!!!!

You’re welcome, Richard, and thanks for visiting my blog. It’s a small world 🙂